On the Cutting Edge at CES 2019, Part II

This is part 2 of our ongoing recap from CES. Visit our recap of Day 1.

Our wily Creative Technologist, Rob Hudak, was back for day 2 of the Consumer Electronics Show (CES) to see what’s up-and-coming in the tech world, and how we can best use it to help our clients.

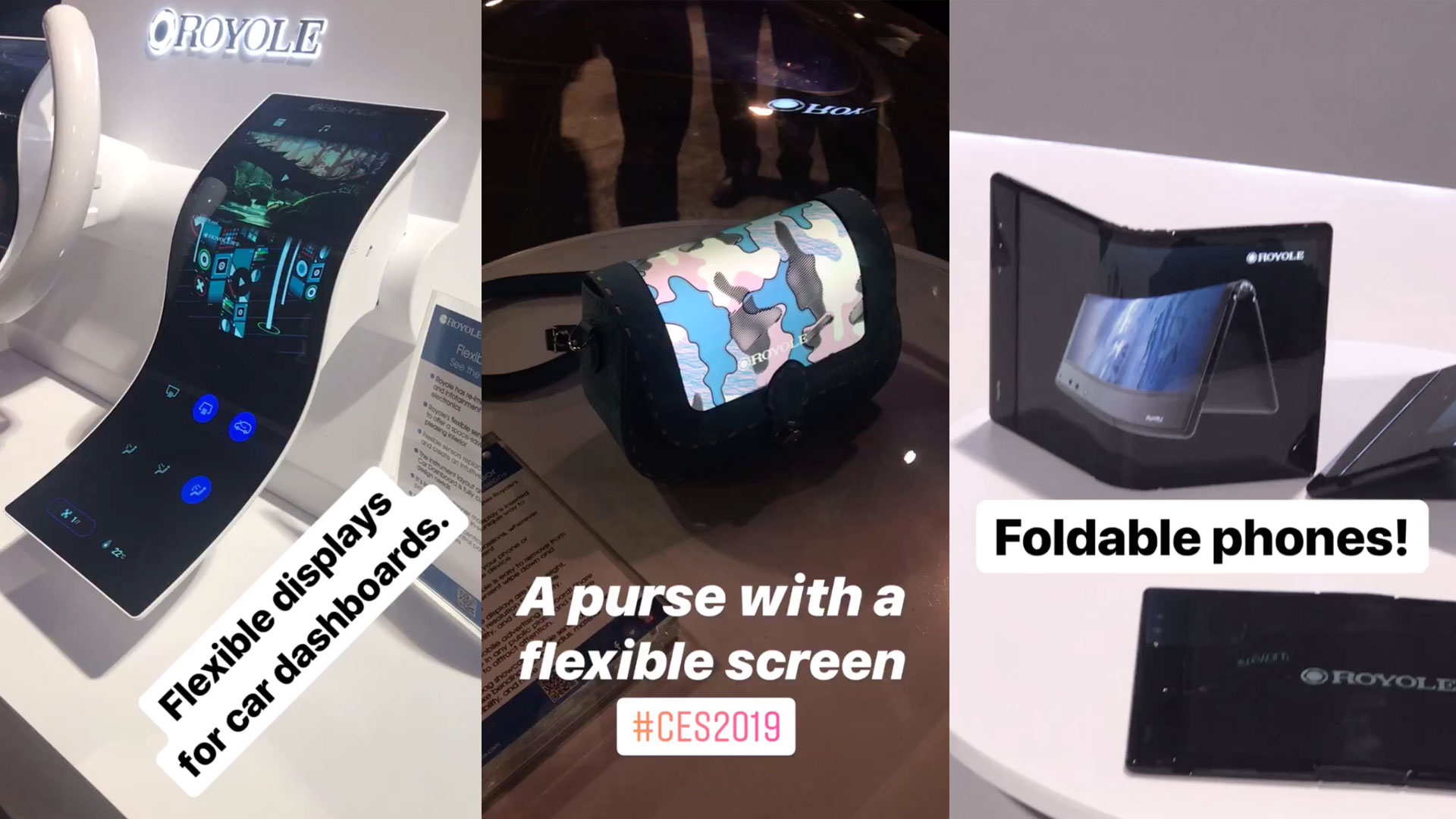

Online chatter has centered around flexible screens – particularly the Royole FlexPai Foldable smartphone/tablet – as well as jewelry made from recycled electronic components.

On the ground, Rob dove deeper into the relationship between artificial intelligence and humans, and how motion tracking and facial recognition are being used to make a huge impact on a variety of disparate fields.

Rob, take it away:

Baby C-3PO-type robots from Avatar Mind

There is a fear among the tech elite that the robots we build could inherit our biases and become superior to us and, well, the rest is the stuff of dystopian science fiction. But at CES, the robots not only look super friendly, some of them even seem downright helpful.

The world has had this idea of helpful robots since “The Jetsons” aired in 1962. The futuristic Jetson family’s robot maid, Rosie, was every 1960s homemaker’s dream – but we still don’t quite have a Rosie. It took much longer to get to the Roomba than it did to get to the moon, but perhaps if JFK had told the USA “before this decade is out, we will have a robot maid in every household,” we would live in a very different place.

In the spirit of progress, CES is displaying baby steps toward all-around helpful robots. Delivery robots are arriving on the scene, both as large autonomous trucks and vans and in smaller forms, like the Snackbot from Robby Industries. PepsiCo actually launched the Snackbot food and beverage delivery robots on the campus of University of the Pacific in Stockton, California. Students who are busy studying and need a snack can download the Snackbot app and place an order, and the Snackbot brings it to them. At the larger end of the scale, Einride recently partnered with Ericsson and Telia on 5G to bring its autonomous, all-electric trucks to market.

The Snackbot by Robby Industries and the Einride self-driving transport truck.

Rosie could do a lot, but I can’t remember an episode of The Jetsons where the family played a game of ping-pong with Rosie. So, I was pretty blown away when I saw the ping-pong-playing robot that Omron used to attract people to their booth. It was a fun and impressive way to get people to talk about how robots like these can be used in manufacturing. And with AI and 5G implemented, a company can run its entire robotic factory from a central “brain.” If something goes wrong on the line, the robot will see it with the sharp eye of a ping-pong player and notify the rest of the bots in the factory to change gears, so to speak.

A robot that plays a good game of ping-pong, made by Omron

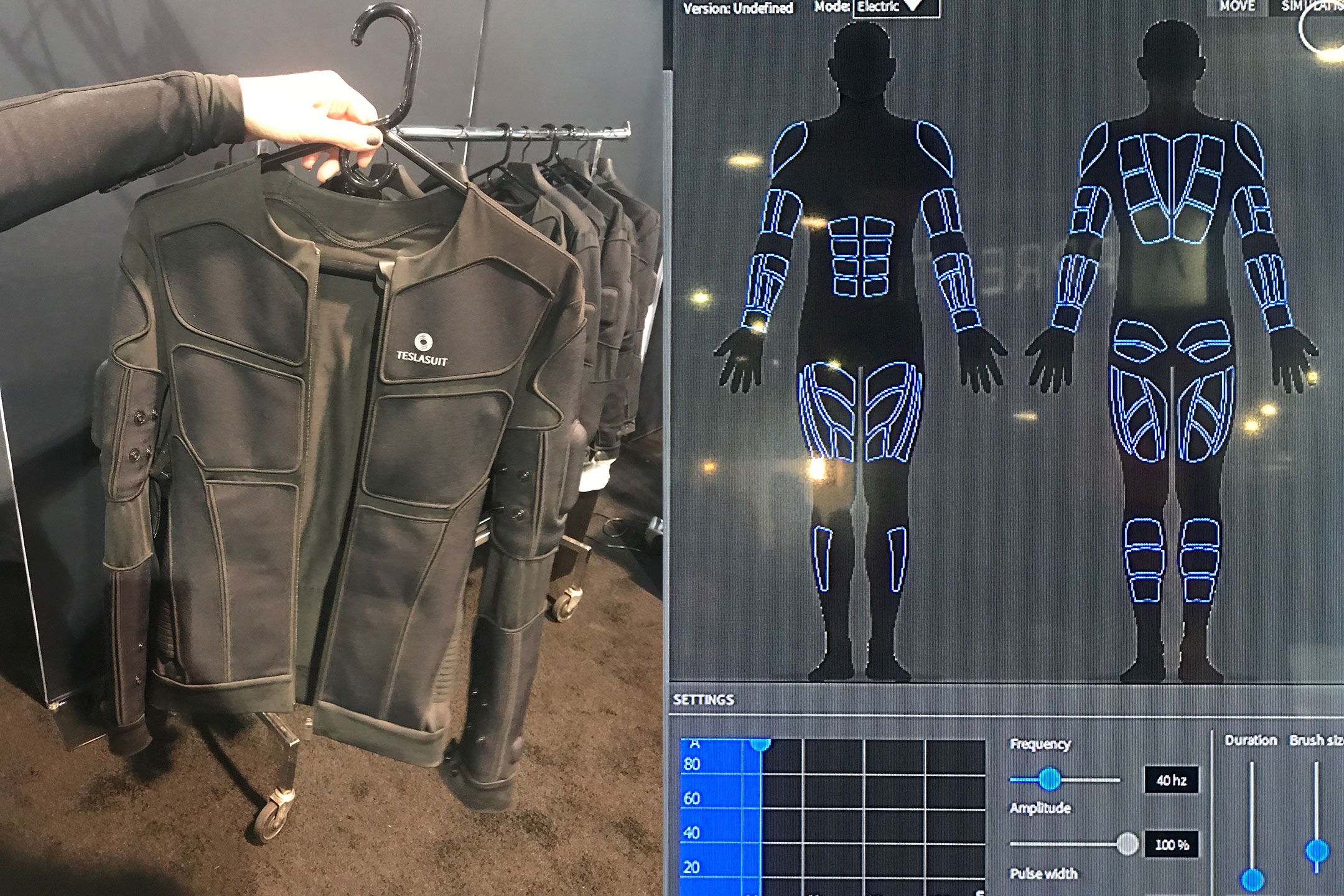

Technology is also taking a more inward look at movement. One example is the haptic suit developed by Teslasuit, which tracks the body’s motion and biometrics. These haptic suits are being used for training purposes, with use cases in fields as diverse as athletics and oil and gas. The suit not only receives input to track your movement, it also transmits signals to warn your body of poor form, so you can adjust and improve your muscle memory on the fly. Imagine a golfer wearing one of these to practice their swing: the suit would send feedback to fix errors in real time.

Haptic suit by Teslasuit

We are also getting to know our faces better by tracking their expressions and programming AI to react in ways that can help us. It seemed that everywhere I turned, a screen was tracking my face with a camera, telling me I was male, age 30-40, and that I was either happy, sad or surprised. This combo of facial expression recognition with AI is also being used for safety and entertainment in automobiles. If a driver’s eyes close, or their head dips, the system senses that the driver is sleepy and sounds an alert to help wake them up. A Toyota demo showed a car interior that sprayed aromatherapy scents and gave massages to create a better ride for a passenger whose facial expression showed sorrow.

Creative Technologist, Rob Hudak

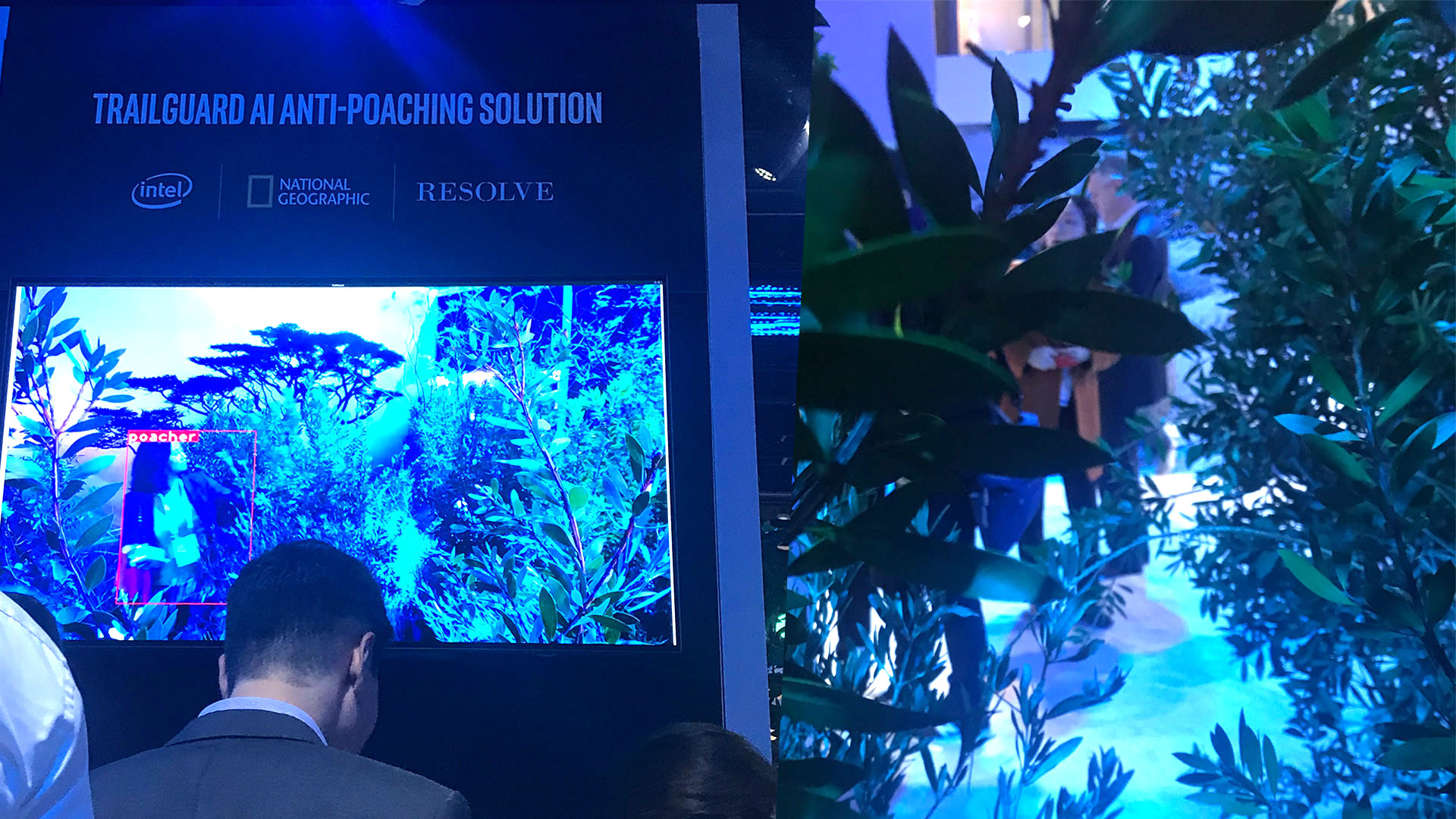

Tracking and processing movement with AI is also helping to save elephants from poachers in Africa. Around 35,000 elephants are killed in Africa each year by poachers. That works out to one elephant poached for its ivory tusks every 15 minutes. Motion tracking and AI are now being used to detect poachers, using cameras that can sense the difference between human and animal movement. The cameras signal the rangers whose job it is to catch the poachers, and the rangers take care of things from there. Intel and National Geographic had a demonstration that allowed you to enter the jungle and be detected. Easy enough to demonstrate, for sure, but it’s the real deal. One hundred of these cameras have already been deployed in the jungle and are helping save elephants and catch poachers right now.

Trailguard AI Anti-poaching Solution at the Intel booth for CES 2019

Before I came to CES, my friends and I were watching football on a large HDTV and joking about how nice the picture was. “They just need to stop trying to make screens look better,” one friend quipped. “I think they’ve done it. Good job! Time to move on!” We all agreed that an engineer’s time would be better devoted to other pursuits in our world of ultra-HD. What my friends and I did not understand a week ago was that the future of displays isn’t going to be so much about the picture quality. It’s going to be about the versatility of the display.

Royole made headlines on the opening day of CES with their flexible screens, which they displayed in a variety of use cases. The flexible displays, invented by their CEO while he was a student at Stanford, have great definition even as they wrap and fold. They offer solutions for foldable phones, car dashboards, accessories and wearables. What most impressed me was seeing one of these displays blowing in the wind like a sheet hung out to dry, all the while delivering high-definition video as it flapped in the breeze. At only about 0.01 mm thick, the displays are “less than one fifth the diameter of the human hair.” So, any thinner and you would be watching a screen floating on thin air.

Takeaways from CES Day 2:

There was nothing sinister about the robotics and AI at CES. Like Rosie from The Jetsons or Star Wars’ C-3PO, they are designed to be friendly and helpful in a variety of ways. Some robots might keep your pet entertained when you are not at home. Others, like John Deere’s connected combine harvester, might tend to acres of farmland while farmers monitor them remotely on their smartphones.

Tracking and understanding human movement are starting to make an impact. Adding biometrics to wearable devices is opening the door to better safety and training methods. Combining AI with motion tracking and facial recognition has many use cases, from making our streets safer to saving elephants from poachers in Africa.

Displays are getting thinner to the point of being flexible, but they’re not going to vanish any time soon. Or perhaps they will become so thin they will disappear, and we’ll be watching HD videos floating on air. It seems we are not too far from that reality.

Stay tuned for part 3 of our series, or catch up on our recap of Day 1 if you missed it.